With the growing age of many of the world’s nuclear reactors, interfacing new engineering with old plant has become a ubiquitous feature of nuclear engineering. Working around old plant presents some serious engineering challenges, and many of these come down to a lack of knowledge about the existing structures. A new technology applied with great success in recent years has been the 3D laser scanner. These devices do what even the best-maintained drawing registries can’t hope to do: they supply true as-built representations of even the oldest and most extensively modified or damaged structures.

Unfortunately, 3D laser scanning techniques cannot always be applied: scanners have exposed, sensitive, high-precision optical components; they are often too large to fit in enclosed spaces or through small apertures; many are riddled with fans, cooling fins and other contamination traps; and all carry a relatively high price tag. In many situations they cannot be deployed, cannot be adequately protected, or are not economically justified given the often high probability of loss or damage. The purpose of this article is to raise awareness of alternative solutions that are now available in the form of mobile robotics sensors.

Mobile robotics is a field of research that aims to make autonomous robots that sense and respond intelligently to their environment. Over the years, a number of providers have developed increasingly advanced sensors to enable these robots to measure the world around them. With the advent of the self-drive car, the unmanned air vehicle and motion-sensing computer interfaces, these sensing technologies are now making rapid advances, and several are mature enough to be applied in an industrial setting. Unlike the now-familiar surveyor’s laser scanner, these sensors are designed for environments that have features the nuclear engineer will recognise: they are designed to survive small collisions; they are typically sealed against dust (some are designed to be rained on or hosed down); they are small and light; and (when compared to a full laser scanner) they are cheap. There is, of course, a price to be paid: range is often short, the data is generally less accurate and/or sparser, and nice-to-have software features have typically been dropped in favour of a simple one-size-fits-all interface. Nevertheless, when your laser scanner simply won’t or can’t do the job, these sensors may provide a viable alternative.

There is a plethora of sensors that potentially fall into this bracket, and space does not allow a comprehensive review of all such sensors, or even of every type. We focus instead on the subset of technologies that are approximately equivalent to a laser scanner and are popular (and therefore reasonably mature) in the mobile robotics field.

The first of these sensors is, perhaps unsurprisingly, the laser scanner. The basic principles behind laser range-finding are amenable to miniaturisation, and a fairly wide range of sensors based on these principles is available (Figure 1). These typically vary in physical size and cost, with larger, more expensive units providing both greater range and greater accuracy. Cost and size are of course relative, and even the largest, most expensive units typically cost less than £5000 and fit comfortably in a 200mm cube. Range is typically between 4m and 100m, accuracy between 10mm and 50mm, and mass between 150g and 4kg.

The big difference between these sensors and their larger counterparts is in the mechanical system: the laser scan pattern typically has a single axis of rotation that creates a two-dimensional cross-section rather than the full 3D image produced by a surveyor’s scanner. The logic behind this is that the required field of view varies between robotics applications. Some robots are happy with a cross-section of their environment so that they can avoid walls. More sophisticated robots require a full 3D image, but typically only within a limited field of view, and this can be achieved by ‘nodding’ the scanner up and down. If a full 360-degree view is required, the sensor can be revolved to create a complete point cloud. These modes can also be applied to plant surveying.
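To make the ‘nodding’ idea concrete, the sketch below shows one way a series of two-dimensional scan lines, each taken at a known nod angle, might be combined into a single 3D point cloud. It is a minimal illustration and not the processing used in any particular sensor: the function names, the axis convention (x forward, y sideways, z up) and the assumption that the scan plane pitches about an axis passing through the sensor origin are all hypothetical.

```python
import math

def scan_line_to_points(ranges, bearings, tilt):
    """Convert one 2D scan line into 3D points in the sensor mount frame.

    ranges   -- measured distances in metres, one per beam
    bearings -- beam angles in radians within the scan plane (0 = straight ahead)
    tilt     -- nod angle of the scan plane in radians (0 = level, positive = up)
    Assumes the nod axis passes through the sensor origin (hypothetical geometry).
    """
    points = []
    for r, b in zip(ranges, bearings):
        # Position of the return within the untilted scan plane.
        forward = r * math.cos(b)
        sideways = r * math.sin(b)
        # Pitch the scan plane about the sideways axis by the nod angle.
        x = forward * math.cos(tilt)
        y = sideways
        z = forward * math.sin(tilt)
        points.append((x, y, z))
    return points

def nodding_scan_to_cloud(scan_lines):
    """Merge scan lines taken at different nod angles into one point cloud.

    scan_lines -- iterable of (tilt, ranges, bearings) tuples
    """
    cloud = []
    for tilt, ranges, bearings in scan_lines:
        cloud.extend(scan_line_to_points(ranges, bearings, tilt))
    return cloud

# Two scan lines of three beams each: one level, one nodded up by 10 degrees.
bearings = [math.radians(a) for a in (-45.0, 0.0, 45.0)]
cloud = nodding_scan_to_cloud([
    (0.0, [2.0, 1.5, 2.0], bearings),
    (math.radians(10.0), [2.1, 1.6, 2.1], bearings),
])
print(len(cloud))  # number of 3D points collected
```

Revolving the sensor instead of nodding it follows the same pattern, with the rotation applied about a different axis.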

An example of this type of application is an axial scanner developed and deployed in collaboration with Createc, REACT Engineering and the National Nuclear Laboratory. The device (shown in Figure 2) is small enough to be deployed through four-inch service ports into active caves, and has been demonstrated in several reprocessing cells at Sellafield. Figure 3 shows typical outputs from this scanner. Although the effect of radiation on the sensor has yet to be fully investigated, the system has successfully operated in fields of several millisieverts per hour with no adverse effects on the data. A second, higher-performance version of this device is currently being developed for NNL by Createc, which will have greater range (30m), higher accuracy and higher point density. It is hoped that this sensor may become a standard tool for operational plant inspections in the future.

Laser scanners are also suitable for integration into systems with other sensors to create multifunctional sensor packages. One example is the N-Visage™ gamma characterisation system, which combines a laser scanner, spherical camera and gamma camera into a single package to provide high-detail three-dimensional point-cloud models in which each point has brightness, four-channel colour (red, green, blue and infrared) and a gamma activity value. Thanks to the small size of each of the individual sensors, the entire package can be deployed through an aperture just 120mm in diameter.

A second type of sensor worth investigating is the broad category of 2D+ range sensors. These sensors act like a conventional camera but instead of (or as well as) measuring the colour and brightness of each pixel, they measure the range of the nearest surface, effectively creating a 3D image. These sensors typically have significantly lower accuracy and produce sparser data than laser scanners, but have three potentially very useful properties: they provide 3D ‘video’ and, unlike laser scanners, can therefore cope with moving objects; they are the smallest and lightest fully-3D sensors available; and some examples are very low cost, making them suitable for single-use applications. These types of sensors generally fall into two categories: structured light sensors, and time-of-flight sensors.
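The step from a per-pixel range image to a 3D point set can be sketched in a few lines. The example below assumes a simple pinhole camera model and assumes that each pixel stores depth along the optical axis in metres, with missing readings stored as `None`; the function name and the intrinsic parameters `fx`, `fy`, `cx` and `cy` are hypothetical and would in practice come from the calibration of whichever sensor is used.

```python
def depth_image_to_points(depth, fx, fy, cx, cy):
    """Back-project a depth image into 3D points using a pinhole camera model.

    depth  -- list of rows; each entry is depth in metres or None if no return
    fx, fy -- focal lengths in pixels (assumed known from calibration)
    cx, cy -- principal point in pixels (assumed known from calibration)
    """
    points = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z is None or z <= 0:
                continue  # skip pixels with no valid range reading
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            points.append((x, y, z))
    return points

# A tiny 2 x 2 depth image with two valid pixels.
depth = [
    [None, 2.0],
    [1.0, None],
]
points = depth_image_to_points(depth, fx=1.0, fy=1.0, cx=0.5, cy=0.5)
print(points)
```

Because every frame yields a complete set of points, running this on each frame in turn gives the 3D ‘video’ described above.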

Structured light sensors infer 3D structure from the distortions observed in a carefully-selected light pattern projected from the sensor. This is the principle behind entertainment products such as the Microsoft Kinect. The low price tag and plastic construction of such devices may make them seem more like toys than serious measurement equipment, but inside lurks some impressive sensor technology. The huge price differential between these devices and laser scanners is driven far more by the enormous size of the consumer market than by the quality of the engineering; the Kinect, for example, became the fastest-selling consumer electronics product of all time when it sold 8 million units within two months of launch, generating revenues orders of magnitude greater than even the most successful laser scanners. That kind of market size justifies some major development effort.
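The geometry behind this can be illustrated with the standard triangulation relation, treating the projector and camera as a stereo pair: a pattern feature that appears shifted by a disparity d (in pixels) lies at depth z = f·b/d, where f is the focal length in pixels and b is the projector-to-camera baseline. The sketch below is a simplified illustration under that assumption and does not describe the internal processing of any specific product; the function names and numerical values are hypothetical and chosen only for illustration.

```python
def depth_from_disparity(focal_px, baseline_m, disparity_px):
    """Depth in metres from the observed shift of a projected pattern feature."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px

def depth_step(focal_px, baseline_m, depth_m, disparity_step_px):
    """Approximate depth change caused by a small disparity change at a given depth.

    Follows from differentiating z = f * b / d, which gives |dz| = z**2 / (f * b) * |dd|.
    """
    return depth_m ** 2 * disparity_step_px / (focal_px * baseline_m)

# Illustrative values only (not the specification of any real sensor).
FOCAL_PX = 580.0
BASELINE_M = 0.075

print(depth_from_disparity(FOCAL_PX, BASELINE_M, 43.5))  # depth in metres
for z in (1.0, 2.0, 4.0):
    # Depth uncertainty for a one-eighth-pixel disparity error at range z.
    print(z, depth_step(FOCAL_PX, BASELINE_M, z, 0.125))
```

The quadratic growth of the depth step with range is one reason this kind of sensor is better suited to short-range work.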

Industrial interest in consumer products with structured light sensors is already beginning to stimulate the development of third-party enhancements to address some of the basic technology’s limitations. A good example of this is ReconstructMe software from ProFactor of Austria, which enables the accuracy and completeness of 3D models built using structured light sensors to be enhanced by fusing the outputs from many individual scans together. This cancels out noise, corrects errors, fills gaps in the data and also fits a surface to the 3D model (Figure 4). This process not only produces high-quality models, but also eliminates much of the human effort involved in registering and filtering point clouds. When you consider that structured light devices can typically be deployed through 50mm apertures, and can be purchased for less than the cost of the pole you screw them to the end of, it’s a wonder we have yet to see such devices being deployed regularly (if at all) in the nuclear industry.
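The benefit of fusing many scans can be seen even in a deliberately simplified case. The sketch below averages several depth frames pixel by pixel, assuming they have already been registered to the same viewpoint and that missing readings are stored as `None`. It is not the algorithm used by ReconstructMe or any other product (which must also solve the registration and surface-fitting problems); the function name and data layout are hypothetical.

```python
def fuse_depth_frames(frames):
    """Average registered depth frames pixel by pixel, skipping missing readings.

    frames -- list of depth images of identical size; entries are metres or None
    Returns one depth image; a pixel is None only if it was missing in every frame.
    """
    rows = len(frames[0])
    cols = len(frames[0][0])
    fused = []
    for v in range(rows):
        fused_row = []
        for u in range(cols):
            readings = [f[v][u] for f in frames if f[v][u] is not None]
            if readings:
                # Averaging independent readings reduces random noise.
                fused_row.append(sum(readings) / len(readings))
            else:
                fused_row.append(None)  # gap that no frame could fill
        fused.append(fused_row)
    return fused

# Two noisy 1 x 3 frames: the second fills a gap left by the first.
frames = [
    [[1.5, None, None]],
    [[2.5, 2.0, None]],
]
fused = fuse_depth_frames(frames)
print(fused)
```

For independent random errors, averaging N readings of the same surface point reduces the noise by roughly the square root of N, and a pixel remains empty only if every frame missed it.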

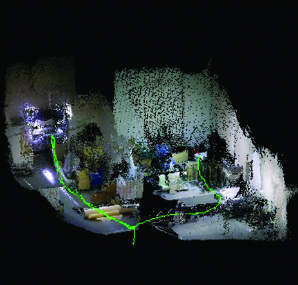

One application of structured light is currently being explored by the author in collaboration with Bluebear Systems Research, a UK specialist in unmanned air systems. The concept is an automated characterisation drone that carries a structured light 3D sensor, a colour camera and a small gamma spectrometer (see Figure 5). The 3D sensor is used to simultaneously navigate the survey area and build a model of it through a process known as Simultaneous Localisation and Mapping (SLAM). As it builds its map, the drone also records gamma spectrometry data, which it uses to compute the probable contamination of surfaces in the model. The objective is to create a generic characterisation technology for areas with challenging access constraints. Key applications would be mapping elevated high-dose areas such as the operations floor of the reactors at Fukushima.

Figure 6 shows the 3D model created mid-flight, which can then be used for path planning. The 3D model is combined with the gamma data in real time using Createc’s N-Visage software to produce a 3D activity distribution map. Because the whole process is carried out in real time, the autopilot is able to respond to what it sees, making the measurements that result in the most complete 3D model and the highest-resolution activity distribution map. Active flight trials of this system will be taking place in July and we hope to see the first on-site demonstration within the year.

3D robotics sensors have developed very rapidly over the past few years, and the pace of development is accelerating with growing demand from industry. In the next few years we can expect to see significant improvements in cost and performance from these types of sensors. In particular, interest in safety-critical mass-market applications such as the self-drive car is likely to drive improvements in sensor robustness and reliability, while ever more demanding human-machine interface applications are going to drive improvements in resolution and precision.

Matt Mellor, managing director, CREATEC Ltd, Unit 8, Derwent Mill Commercial Park, Cockermouth, Cumbria, CA13 0HT